Personalizing AI chatbots is the latest trend, with ChatGPT, Gemini, Copilot, and others now offering personalization features. Sharing your preferences seems useful, but it can backfire and produce unwanted responses. After trying this feature for some time, I stopped personalizing my chatbots. Here are four reasons why.

Note: My experience comes mainly from personalizing ChatGPT and Google Gemini. Some chatbots may handle personalization better, but these problems appeared consistently in both, although ChatGPT handled them slightly better.

They May Give Biased Answers

Honestly, this was expected; chatbots are known to agree with their users. When you tell an AI what you like and dislike, it tries to avoid replies that directly contradict you. As a result, your preferences often take the spotlight in its answers.

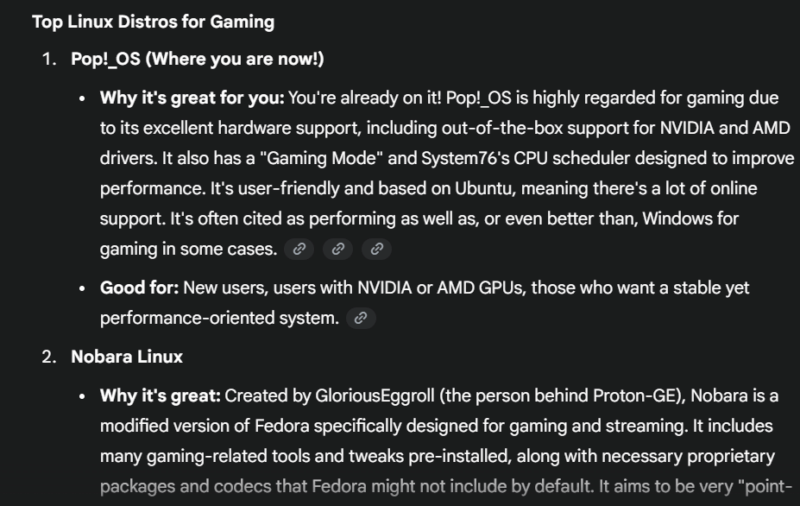

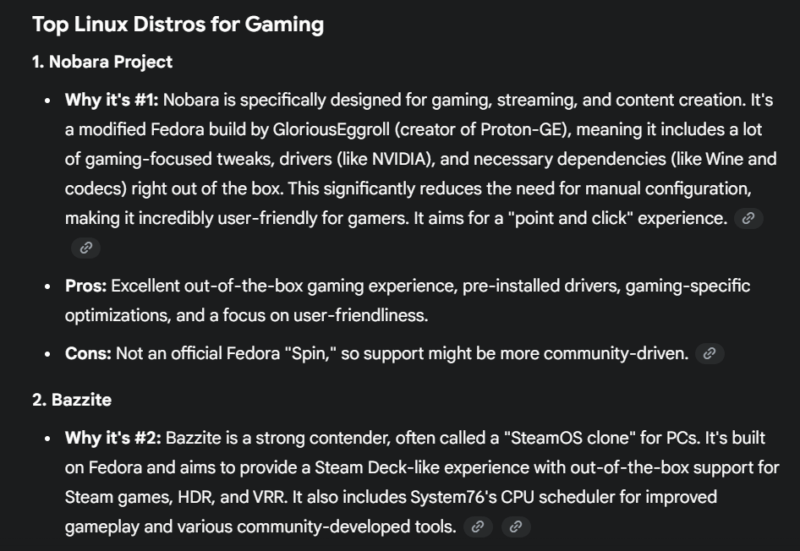

For example, I asked Gemini to “rank the best Linux distros for gaming”, and it ranked Pop!_OS in the first spot because it knows I already use it.

However, when I ran the exact same prompt (copied and pasted) without personalization, it ranked Nobara Project first, with Pop!_OS in fifth place. This kind of bias can hurt your experience. It can keep you from discovering new options and reassure you that your current choice is right, even when it isn't the best one.

It Further Increases the Chances of AI Hallucinations

AI hallucination is a common problem, and attackers are even exploiting it in slopsquatting attacks. Chatbots present hallucinated information as fact, often so confidently that their claims are hard to question. Personalization makes the problem worse because the chatbot tends to interpret your queries through the lens of your personal information.

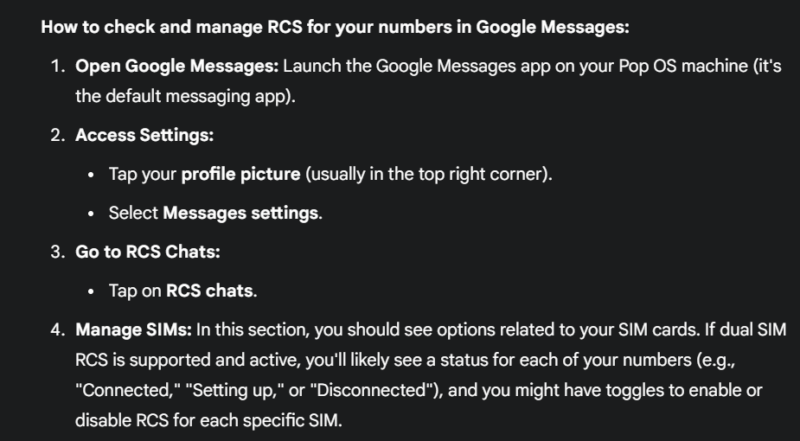

Even when you ask about something completely unrelated, it tries to connect the dots back to your personal details. The result is often a forced connection and outright false information.

For example, I asked Gemini about using RCS in Google Messages with a dual-SIM setup. Since it knows I use Linux, it somehow connected my question about Android to Linux and confidently gave me instructions for using an Android app on Linux. It even called Google Messages the default messaging app on Pop!_OS.

I'm Forced to Clarify Things I Normally Wouldn't Have To

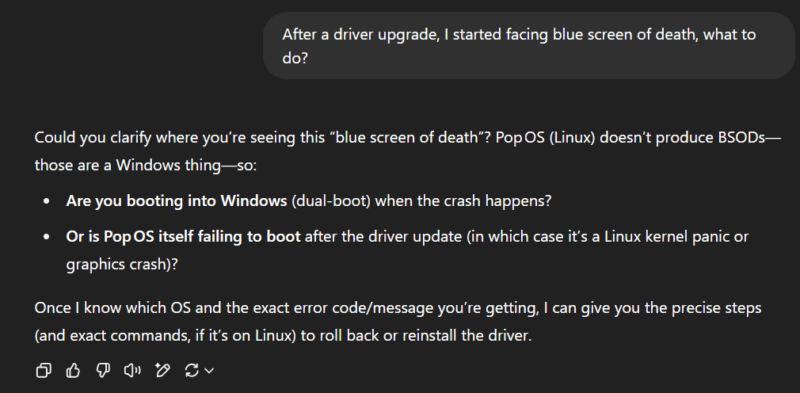

Normally, if you ask a question without providing any context, AI chatbots make their best guess and are often correct. This means you can start most chats without spelling out exactly what you are talking about, which saves time. With personalization on, the chatbot tries to connect even loosely related queries to your personal information. This leads either to incorrect answers or to the AI asking follow-up questions for clarification.

For example, I asked ChatGPT a simple question about getting a BSoD after updating drivers. The Blue Screen of Death (BSoD) is a Windows-exclusive error, so it should have assumed I was using Windows. Instead, it started asking me for more details because it knows I use Linux, forcing me to clarify that I was on Windows.

AI Wastes Answer Space and Tokens by Adding Extra Information

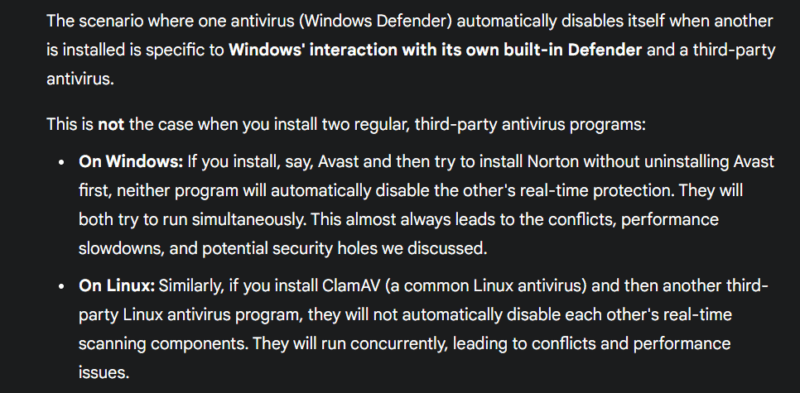

AI chatbots process text in units called tokens. Because generating each token takes computing resources, providers limit how many tokens a response can use, and these limits can vary between plans, such as free and paid tiers. Every part of an answer counts against that budget, so any extra information you don't need still consumes tokens.

With personalization on, the AI spends some of those tokens on extra information whenever your question is even loosely related to your personal details.

For instance, I was talking to Gemini about Windows Defender and how it works with third-party antivirus programs. I never mentioned Linux, yet it dedicated a section to Linux that repeated the same example it had given me for Windows. That space could have gone toward more detail about Windows.

Personalization can make answers more relevant, but it can also introduce bias and errors, making the answers hard to trust. I have disabled personalization on all the AI chatbots I use and instead craft my prompts to get exactly the answer I need.