Every few weeks, another tech company slips a new clause into its privacy policy. The message is always the same: “We are using your data to train AI, unless you stop us.” That’s not consent; it’s exhaustion disguised as choice.

This quiet shift is what many call opt-out fatigue, a form of weariness that’s become central to the digital age. It’s no longer enough to simply use the internet. You also have to defend your right not to have your data fed to the machines that run it.

In this new reality, the idea of AI data privacy opt-out has become a test of how much control we still have over our digital lives.

How Default Opt-Ins Became the Industry Standard

The rise of generative AI has pushed companies to hoard vast amounts of user data for training. What started as opt-in experiments has turned into a widespread default practice. Companies have normalized a world where “yes” to sharing user data is automatic and “no” takes workarounds.

LinkedIn’s AI data grab, for instance, auto-includes user posts, comments, and profile data in AI model training. This gives Microsoft access to billions of data points despite the company claiming data anonymity. While it’s possible to opt out after navigating layers of menus, the default settings assume consent without asking.

Meta does the same. Its Llama models train on public user content from Facebook and Instagram by default. Even private chats can influence targeted ads, with no simple toggle to stop it. Users end up deleting entire chats or seeking other workarounds to prevent Meta AI from using their chat data.

Google’s Gemini project allows AI learning from YouTube activity, search history, and even your Gems, unless you dig through privacy options to turn it off. A look at why Google is letting you share Gemini Gems shows how the system is framed as collaborative while quietly expanding data access.

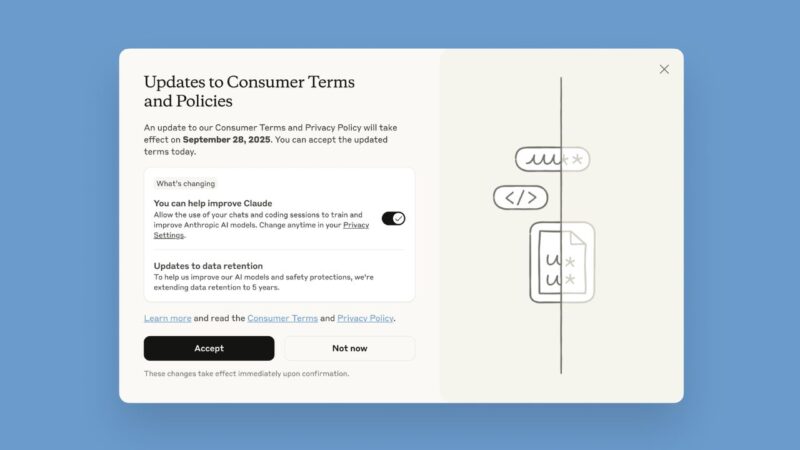

Anthropic’s Claude chatbot made headlines with a policy update to retain chats for up to five years to train models unless users opt out by a deadline.

This is not accidental. Data is gold, and default opt-in keeps it flowing without friction. Companies exploit a simple truth: most users will never notice, and those who do rarely have the time or patience to change it.

This system also persists because privacy laws in most regions were written for cookies and ads, not AI. Regulators are always a few steps behind, giving companies time to normalize opt-out defaults before the rules catch up.

Why Opt-Out Systems Fail Users

The idea of choice in online privacy has become an illusion. Technically, you can opt out. Practically, few people ever do. Consent fatigue is the core issue: we’re bombarded with so many decisions that we stop making them at all.

AI companies rely on that user fatigue. Each “we’ve updated our privacy policy” pop-up adds another layer of confusion. So, clicking “Accept” is no longer an agreement; it has become a habit.

A 2023 Pew study found that almost 80% of American users skip reading privacy policies because they find them too confusing or time-consuming. Companies are aware of this and design their products accordingly.

Even I’ve done it, skimming terms I knew I should read. These systems don’t need deception when exhaustion works just as well. They place the full burden of privacy on individuals, who must hunt through layers of settings to opt out.

For Claude, opting out stops future use but leaves past data in limbo for years. Similarly, Google’s system deletes history upon opt-out, forcing a choice between privacy and utility. The situation is much the same across the board.

This dynamic mirrors other manipulative designs. We’ve seen similar patterns in consumer tech, like Samsung’s decision to push ads to smart appliances, where user control exists in theory but not in practice. The strategy is identical: disguise coercion as convenience.

The Real Winners Behind Your Data

The AI data privacy opt-out debate isn’t just about privacy. It’s about profit and control. Behind the curtain, AI companies reap massive gains from this setup.

The global AI market hit $638 billion in 2024 and is projected to reach $1.8 trillion by 2030, per Semrush and Statista, with user data as a key driver for training models without licensing fees. For tech giants like Microsoft, Meta, Anthropic, and Google, user data is a goldmine.

LinkedIn’s integration with Azure and OpenAI, Meta’s global AI ambitions, and Google’s Gemini network all rely on continuous, large-scale data ingestion. The more content users produce, the smarter and more profitable the systems become.

This approach to AI data privacy opt-out keeps the data supply uninterrupted. Users generate the training material for free, while companies monetize it to build products that can automate, replicate, or replace human work.

It also creates a monopoly for the AI giants, since smaller AI firms can’t compete without similar data hoards.

The winners are clear: big AI companies create a cycle where better AI draws more users, yielding more data. Meanwhile, we get minimal benefits, like smarter suggestions, at the cost of privacy and autonomy. In the economy of AI, every user becomes both the product and the unpaid labour.

Fighting Back for Real Consent

Still, users are not powerless. Across Europe, privacy advocates are filing GDPR complaints to stop unauthorized AI data training. Article 21 of the GDPR grants citizens the right to object to personal data processing, and thousands have started invoking it.

Similar privacy laws have been enacted elsewhere, including India’s DPDP Act, China’s PIPL, and California’s Consumer Privacy Act. They all aim to limit how tech companies source and process data, including for AI training. Penalties vary by law; under the GDPR, fines can reach 4% of global annual turnover.

In other regions, where federal privacy laws lag, vigilance is essential. Self-defense strategies help: use browser-level privacy tools and disable AI recommendations whenever they pop up. Read more on ways to prevent AI chatbots from training on your data.

Turn off AI training features right away: use LinkedIn’s opt-out option, adjust Meta’s AI settings, switch off ChatGPT’s “improve the model for everyone,” and review Copilot’s privacy controls. Delete old chats to limit exposure and use temporary modes for sensitive queries.

The key insight is that collective action can shift norms. If we all play our role by opting out and voicing our concerns, companies will be forced to earn consent rather than assume it.

The Case for Opt-In by Choice

Individual vigilance alone is not the answer. Opt-in by choice ought to be the norm, not the exception. That would curb corporate overreach and restore trust.

Users would decide voluntarily whether to share data, which would ensure informed consent. Making data harder to hoard would also curb the incentive to over-collect and promote ethical sourcing, such as licensed datasets.

Adopting opt-in by choice would not slow innovation. Rather, companies might innovate in privacy tech, like better anonymization, to attract sharers. Proton’s Lumo chatbot already does this, and it could pave the way for better practices.

I’m not against AI; I write about technology every day. However, in this digital era, I support choice. True innovation should respect privacy rather than exploit it.

Awareness is Power

Default opt-in is not convenience; it’s control. The fight over AI data privacy opt-out policies is not just a technical argument. It is a fight to own our digital selves.

Opt-out fatigue shows how these tech giants use exhaustion as a tool. They win when users stop trying. As users, we must not give up that power.

The more we normalize silent consent, the easier it becomes for them to act without permission. So, we must stay alert until our data privacy is prioritized.